Physics-informed algorithms for sensing and navigating turbulence

Imagine you are surrounded by water. There is something you need to reach. You cannot really see your target, but you know it is moving, because your skin senses the currents it generates. You also know it can't be far, because you smell its odor. How can you reach your target? What are the algorithms that guide sensory-driven navigation in a world dominated by turbulence? Living systems solve this problem routinely to find food and mates or to escape predators, and many animals are exquisitely adapted to navigate turbulence. But what are the algorithms they use to:

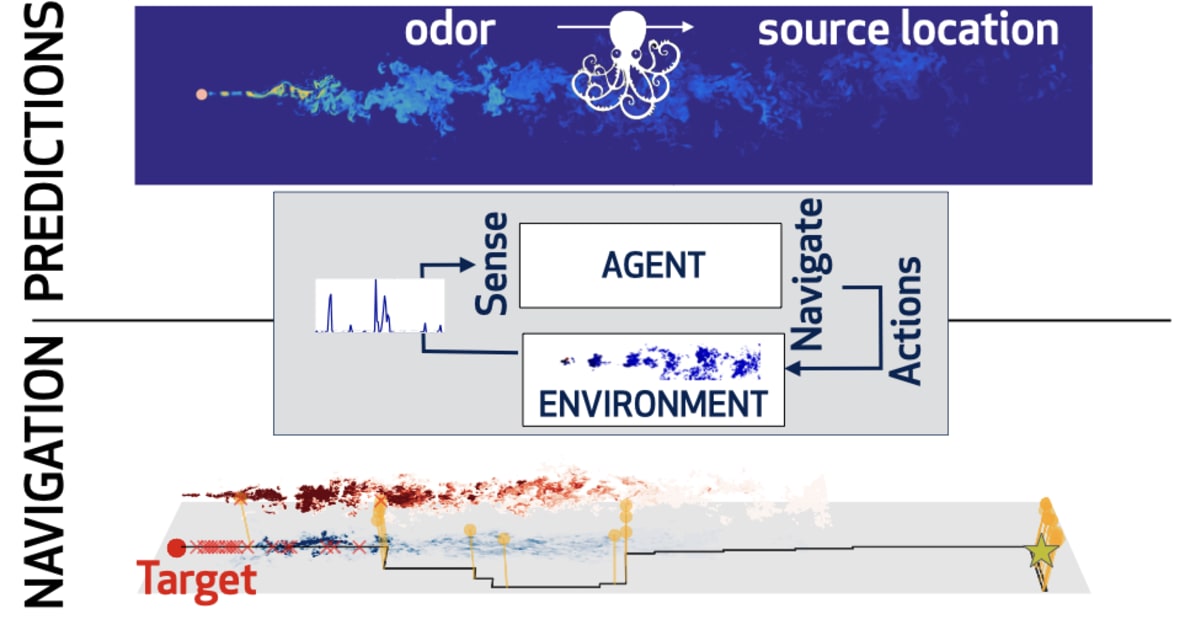

- combine prediction with navigation?
- adapt to a changing physical environment?
- leverage multimodal sensory cues?

To tackle these questions, we combine supervised learning with reinforcement learning, using data from massive numerical simulations and asymptotic models of fluid dynamics.

Predictions

Organisms solve sophisticated olfactory problems even when odor cues are broken up by turbulence. But can an agent predict the position of a target using a short sample of the odor it releases, and if so, how? We developed supervised learning algorithms to demonstrate that measures of the timing and intensity of odor detections enable olfactory predictions. However, to maintain predictive power, animals must rely on different measures as they move, which, we predict, will be reflected in both behavior and neural representations of odor dynamics. The next question is: are the best predictors also the best features to support navigation?
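To make the idea of predicting from timing and intensity concrete, here is a minimal sketch in Python. It is not the pipeline used in our work: the odor signals come from a hypothetical toy generator (`toy_odor_signal`) rather than from turbulence simulations, the three features and the detection threshold are illustrative choices, and plain linear least squares stands in for the learning algorithm.

```python
import numpy as np

rng = np.random.default_rng(0)

def odor_features(signal, threshold=0.1):
    """Summarize an odor time series by intensity and timing measures.

    Returns [mean intensity during detections,
             intermittency (fraction of samples above threshold),
             mean whiff duration in samples].
    """
    detected = signal > threshold
    intermittency = detected.mean()
    mean_intensity = signal[detected].mean() if detected.any() else 0.0
    # A whiff is a run of consecutive samples above threshold.
    padded = np.concatenate(([False], detected, [False]))
    edges = np.diff(padded.astype(int))
    starts = np.flatnonzero(edges == 1)
    ends = np.flatnonzero(edges == -1)
    mean_whiff = (ends - starts).mean() if starts.size else 0.0
    return np.array([mean_intensity, intermittency, mean_whiff])

def toy_odor_signal(distance, n_samples=500):
    """Hypothetical toy model, NOT a turbulence simulation:
    detections become rarer and weaker as distance grows."""
    present = rng.random(n_samples) < 1.0 / (1.0 + distance)
    amplitude = rng.exponential(1.0 / distance, n_samples)
    return present * amplitude

# Synthetic dataset: one short odor sample per source distance.
distances = rng.uniform(1.0, 10.0, 500)
X = np.array([odor_features(toy_odor_signal(d)) for d in distances])
X = np.column_stack([X, np.ones(len(X))])  # add a bias column

train, test = slice(0, 400), slice(400, 500)
w, *_ = np.linalg.lstsq(X[train], distances[train], rcond=None)

pred = X[test] @ w
rmse_model = np.sqrt(np.mean((pred - distances[test]) ** 2))
# Baseline: always predict the mean training distance.
rmse_baseline = np.sqrt(np.mean((distances[train].mean() - distances[test]) ** 2))
print(f"RMSE with odor features: {rmse_model:.2f}, baseline: {rmse_baseline:.2f}")
```

In this toy setting all three features vary with distance by construction, so the comparison with the baseline only illustrates the workflow. Which measures stay predictive at different locations in a real turbulent flow is the question addressed above.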

Collaboration with

- Lorenzo Rosasco - UniGe | MalGa
- Nicodemo Magnoli - UNIGE

Navigation

We develop reinforcement learning algorithms adapted to turbulent navigation, a paradigmatic example of decision making under uncertainty. In recent work, we used the framework of partially observable Markov decision processes (POMDPs) to clarify why animals often alternate between sniffing the ground and sniffing the air while tracking scents. We are currently studying the foundations of these algorithms in the context of olfactory navigation, with the aim of optimizing their performance and extending the results to multimodal sensory cues and to a changing physical environment.
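In a partially observable Markov decision process the agent cannot observe the state (here, where the source is) and instead maintains a belief, a probability distribution over states that is updated by Bayes' rule after every observation. The sketch below shows that update on a one-dimensional grid. It is an illustration only: the detection likelihood in `detection_prob`, its parameters, and the grid are hypothetical and are not taken from our models of turbulent odor transport.

```python
import numpy as np

def detection_prob(agent_pos, source_positions, scale=3.0):
    """Hypothetical likelihood: the chance of detecting odor decays
    exponentially with the distance between agent and source."""
    return 0.9 * np.exp(-np.abs(source_positions - agent_pos) / scale)

def update_belief(belief, agent_pos, detected, source_positions):
    """Bayesian update of the belief over source positions
    after one binary observation (odor detected or not)."""
    p = detection_prob(agent_pos, source_positions)
    likelihood = p if detected else 1.0 - p
    posterior = belief * likelihood
    return posterior / posterior.sum()

# Candidate source positions and a uniform prior.
grid = np.arange(21)
prior = np.full(grid.size, 1.0 / grid.size)

# A detection at position 5 concentrates the belief around 5.
after_hit = update_belief(prior, 5, True, grid)
# No detection at position 5 pushes the belief away from 5.
after_miss = update_belief(prior, 5, False, grid)

print("most likely source after a detection:", grid[after_hit.argmax()])
print("least likely source after a miss:", grid[after_miss.argmin()])
```

A navigation policy in this framework maps the current belief to an action, for example where to move next or where to sample, which is why the quality of the observation model matters as much as the learning algorithm.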

Collaboration with

- Massimo Vergassola - CNRS, Ecole Normale Superieure
- Gautam Reddy - Harvard University